World models (AI algorithms that can generate simulated environments in real time) are one of the more impressive applications of machine learning. There has been a lot of movement in this space over the last year, and on Wednesday Google DeepMind added to it by announcing Genie 2. While its predecessor was limited to generating 2D worlds, the new model can create 3D worlds and sustain them for significantly longer.

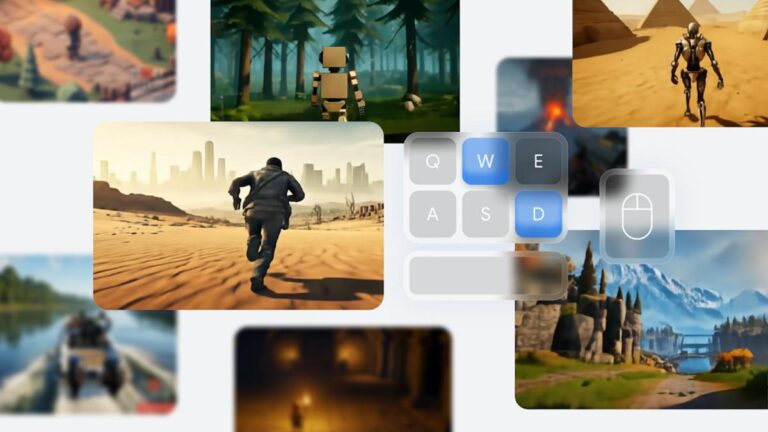

Genie 2 is not a game engine. Instead, it is a diffusion model that generates images as the player (a human or another AI agent) moves through the world the software is simulating. As it generates frames, Genie 2 infers ideas about the environment, which lets it model water, smoke, and physics effects, though some of those interactions can look very game-like. The model is also not limited to rendering scenes from a third-person perspective; it can handle first-person and isometric views as well. All it needs to get started is a single image prompt, either generated by Google’s own Imagen 3 model or taken from the real world.

Introducing Genie 2: an AI model that lets you create an infinite variety of playable 3D worlds, all from a single image. 🖼️

Such large-scale underlying world models could allow future agents to be trained and evaluated in countless virtual environments. →… pic.twitter.com/qHCT6jqb1W

— Google DeepMind (@GoogleDeepMind) December 4, 2024

Notably, Genie 2 remembers parts of a simulated scene even after they leave the player’s field of view, allowing it to accurately reconstruct those elements when they come back into sight. This is in contrast to other world models such as Oasis, which, at least in the version Decart released to the public in October, had trouble remembering the layouts of the Minecraft levels it generated in real time.

However, even Genie 2 has limits in this regard. DeepMind says its model can generate a “consistent” world for up to 60 seconds, and most of the examples the company shared on Wednesday run for significantly less time; most of the videos are around 10 to 20 seconds long. Additionally, the longer Genie 2 needs to maintain the illusion of a consistent world, the more artifacts creep in and the more image quality degrades.

DeepMind did not elaborate on how it trained Genie 2, other than to say it relied on “large-scale video datasets.” Don’t expect DeepMind to make Genie 2 publicly available any time soon. For now, the company primarily sees the model as a tool for training and evaluating other AI agents, including its own SIMA algorithm, and as something that artists and designers could use to prototype and quickly try out ideas. Looking further ahead, DeepMind suggests that world models like Genie 2 are likely to play a key role on the path to artificial general intelligence.

“Training more general embodied agents has traditionally been bottlenecked by the availability of sufficiently rich and diverse training environments,” DeepMind said. The company added that Genie 2 could enable future agents to be trained and evaluated in a limitless curriculum of novel worlds.