At this point, you’re probably either excited about using generative AI to create realistic videos, perhaps believing it will usher in a new era, or you think it’s a morally bankrupt endeavor that devalues artists and opens the door to a scourge of deepfakes we’ll never escape. It’s hard to find a middle ground. Meta isn’t going to change anyone’s mind with its latest video creation AI model, Movie Gen, but no matter what you think about AI media creation, it could end up being an important milestone for the industry.

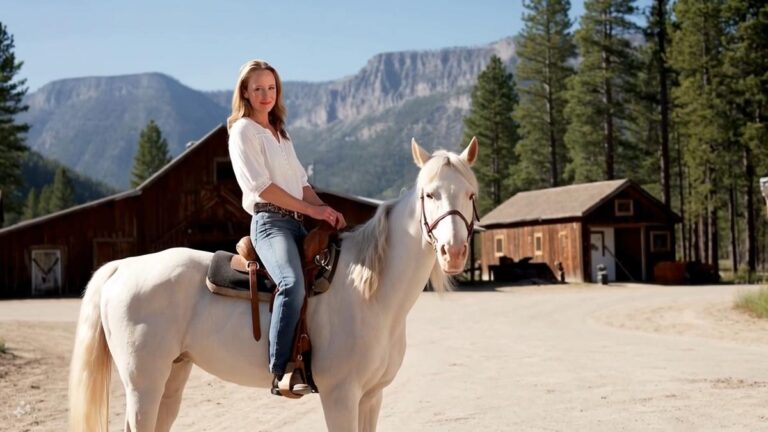

Movie Gen lets you create realistic videos at up to 1080p (upscaled from 768 x 768 pixels) at 16 fps or 24 fps, complete with music and sound effects. You can also generate a personalized video by uploading a photo. Importantly, it also appears to let you edit videos easily with simple text commands. Notably, it can edit regular, non-AI videos with text prompts, too. It’s easy to see how this could help you clean up the footage you shoot on your phone for Instagram. Movie Gen is purely research at this point: Meta won’t be releasing it to the public, so you have some time to think about what it all means.

The company describes Movie Gen as its “third wave” of generative AI research, following earlier media creation tools like Make-A-Scene and more recent products built on its Llama AI models. It is powered by a 30-billion-parameter transformer model that can generate 16-second videos at 16 fps or 10-second videos at 24 fps. It also has a 13-billion-parameter audio model that can generate 45 seconds of 48kHz audio, such as “ambient sound, sound effects (Foley), and instrumental background music,” synchronized to video. The Movie Gen team writes in its research paper that there is no support for synchronized voice yet, “due to our design choices.”


Meta says Movie Gen was trained on “a combination of licensed and publicly available datasets,” including around 100 million videos, 1 billion images, and 1 million hours of audio. The company is a bit vague about where that data came from. Meta has already admitted to training its AI models on data from every Australian user’s account, but it’s even less clear what the company is using outside of its own products.

As for the videos themselves, Movie Gen certainly looks impressive at first glance. According to Meta, people in its own A/B tests generally preferred Movie Gen’s results over those of OpenAI’s Sora and Runway’s Gen3 model. Movie Gen’s AI humans look surprisingly realistic, without many of the telltale signs of AI video (particularly unsettling eyes and fingers).


“While there are many interesting use cases for these underlying models, it is important to note that generative AI does not replace the work of artists and animators,” the Movie Gen team wrote in a blog post. “We are sharing this research because we believe in the power of this technology to help people express themselves in new ways and provide opportunities to those who would not otherwise have them.”

Still, it’s unclear how mainstream users will actually use AI-generated video. Will we fill our feeds with AI videos instead of shooting our own photos and videos? Or will Movie Gen be broken down into individual tools that help us enhance our own content? You can already easily remove objects from the background of a photo on your smartphone or computer, and more advanced AI video editing seems like the next logical step.