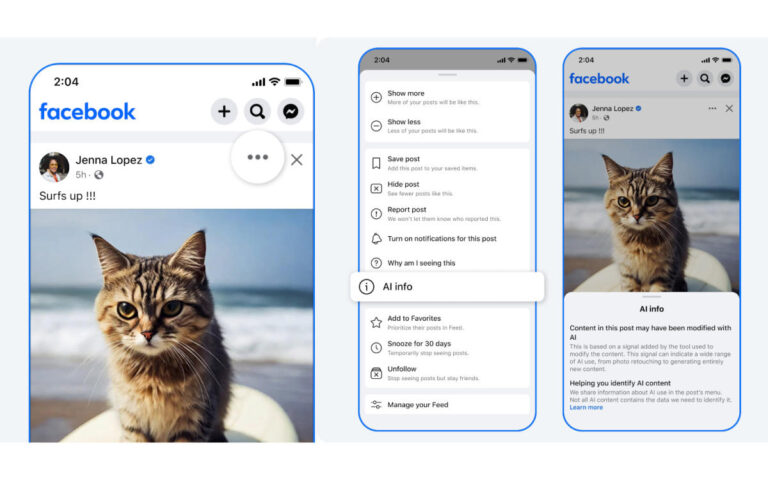

Starting next week, Meta will no longer put visible labels on Facebook images that have been edited using AI tools, making it much harder to tell whether they’re pristine or doctored. To be clear, the company will still annotate AI-edited images, but you’ll need to tap the three-dot menu in the top-right corner of a Facebook post and scroll down to find “AI Info” among many other options. Only then will you see a note saying that the content of the post may have been altered by AI.

However, images generated using AI tools will still have an “AI Info” label visible on the post. Clicking on it will reveal a note indicating whether the image was labeled because of shared industry signals or because someone self-identified it as AI-generated. Meta began applying AI-generated content labels to a broader range of videos, audio and images earlier this year. But after widespread complaints from photographers that the company was falsely flagging content that wasn’t AI-generated, Meta changed the wording of the label from “Made with AI” to “AI Info” in July.

Meta said it worked with other companies in the industry to improve its labeling process, and that the change was made to “better reflect the extent of AI used on content.” Still, manipulated images have been widely used to spread misinformation in recent times, and the change could make it harder to identify fake news, which is rife this election season.