In iOS 18.2, Apple is adding a new feature that revives some of the intent behind its halted CSAM scanning plans. This time, it does so without breaking end-to-end encryption or providing government backdoors. The company’s Communication Safety enhancements, which will roll out first in Australia, use on-device machine learning to detect and blur nude content, add warnings and require confirmation before users proceed. If the child is under 13, they can’t continue without entering the device’s Screen Time passcode.

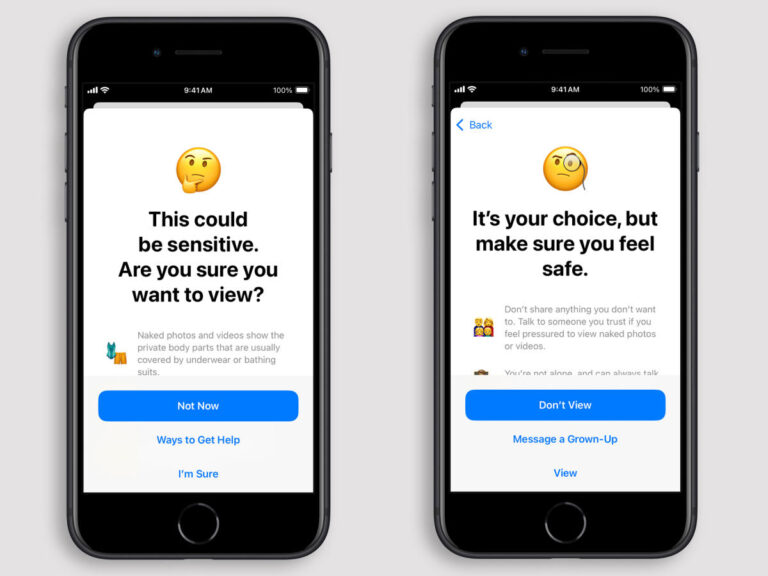

If the device’s onboard machine learning detects nude content, the feature automatically blurs the photo or video, displays a warning that the content may be sensitive and offers ways to get help. Options include leaving the conversation or group thread, blocking the person and accessing online safety resources.

The feature also displays a message that reassures the child it’s okay not to view the content or to leave the chat. There is also an option to message a parent or guardian. If the child is 13 or older, they can still confirm that they want to continue after receiving those warnings, with repeated reminders that it’s okay to opt out and that further help is available. According to The Guardian, it also includes an option to report the images and videos to Apple.

On iPhone and iPad, the feature analyzes photos and videos in Messages, AirDrop, Contact Posters (in the Phone or Contacts app) and FaceTime video messages. In addition, it will scan “some third-party apps” if the child selects a photo or video to share with them.

The supported apps vary slightly on other devices. On Mac, it scans Messages and some third-party apps if users choose content to share through them. On Apple Watch, it covers Messages, Contact Posters and FaceTime video messages. Finally, on Vision Pro, the feature scans Messages, AirDrop and some third-party apps (under the same conditions mentioned above).

This feature requires iOS 18, iPadOS 18, macOS Sequoia, or visionOS 2.

The Guardian reports that Apple plans to expand the feature globally after the Australian trial. The company likely chose Australia for a specific reason: the country is set to introduce new regulations requiring big tech companies to police child abuse and terrorist content. As part of the new rules, Australia agreed to drop a requirement that would compromise security by breaking end-to-end encryption, adding a clause that mandates such measures only “where technically possible.” Companies have until the end of the year to comply.

User privacy and security have been at the center of the controversy surrounding Apple’s infamous attempt to police CSAM. In 2021, it prepared to introduce a system that would scan for images of online sexual abuse and send them to human reviewers. (This came as something of a shock, given Apple’s history of standing up to the FBI over its attempts to unlock an iPhone belonging to a terrorist.) Privacy and security experts argued that the feature would open a backdoor for authoritarian regimes to spy on their citizens, even in situations where there is no exploitative content. The following year, Apple abandoned the feature, leading (indirectly) to the more balanced child safety feature announced today.

Once it rolls out globally, you can activate the feature under Settings > Screen Time > Communication Safety by toggling the option on. That section has been enabled by default since iOS 17.