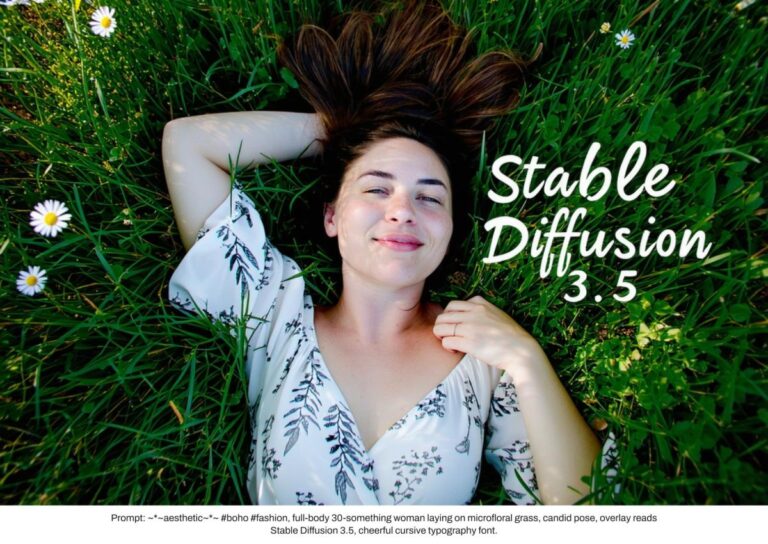

Stable Diffusion, an open-source alternative to AI image generators like Midjourney and DALL-E, has been updated to version 3.5. The new model attempts to right some of the wrongs (that may be an understatement) of the widely criticized Stable Diffusion 3 Medium. According to Stability AI, the 3.5 model adheres to prompts more faithfully than other image generators and competes with much larger models in output quality. Additionally, it's tuned to produce a wider variety of styles, skin tones, and features without needing to be explicitly prompted to do so.

The new model is available in three flavors. Stable Diffusion 3.5 Large is the most powerful of the trio, offers the best quality of the bunch, and, by the company's account, leads the industry in prompt adherence. Stability AI says this model is suitable for professional use at a resolution of 1 megapixel.

Stable Diffusion 3.5 Large Turbo, on the other hand, is a “distilled” version of the larger model that emphasizes efficiency over maximum quality. According to Stability AI, the Turbo variant still produces high-quality images with strong prompt adherence in just four steps.

Finally, Stable Diffusion 3.5 Medium (2.5 billion parameters) is designed to run on consumer hardware and strikes a balance between quality and simplicity. Easily customizable, this model can produce images at resolutions from 0.25 to 2 megapixels. However, unlike the first two models, which are available now, Stable Diffusion 3.5 Medium won’t be released until October 29th.
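To put those megapixel figures in more familiar terms, here is a rough sketch in Python that converts a pixel budget into the side length of a square image. It rests on two assumptions that do not come from Stability AI's announcement: that 1 megapixel means 1024 x 1024 pixels, and that dimensions are rounded down to a multiple of 64. The `square_side` helper is hypothetical and purely illustrative.

```python
import math


def square_side(megapixels: float, multiple: int = 64) -> int:
    """Return the side length, in pixels, of the largest square image
    that fits the given megapixel budget.

    Assumptions (not from the announcement): 1 MP = 1024 * 1024 pixels,
    and sides are rounded down to a multiple of `multiple`.
    """
    pixels = int(megapixels * 1024 * 1024)
    side = math.isqrt(pixels)            # integer square root, rounded down
    return (side // multiple) * multiple  # snap down to the nearest multiple


for mp in (0.25, 1, 2):
    side = square_side(mp)
    print(f"{mp} MP is roughly {side} x {side} pixels")
```

Under those assumptions, the Medium model's stated range runs from about 512 x 512 up to about 1408 x 1408 for square images, while the 1-megapixel figure quoted for the Large model corresponds to 1024 x 1024.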

The new trio follows the botched release of Stable Diffusion 3 Medium in June. The company admitted that the release “didn’t quite meet our standards or the expectations of our community,” after it generated laughably grotesque body horror in response to prompts that asked for no such thing. It is probably no coincidence that Stability AI repeatedly mentions exceptional prompt adherence in today’s announcement.

Although Stability AI mentioned it only briefly in the announcement blog post, the 3.5 series includes new filters to better reflect human diversity. The company describes the new models’ human output as “representative of the world, not just one type of human, with a variety of skin tones and features without the need for extensive prompting.”

Hopefully, the approach is sophisticated enough to account for subtleties and historical sensitivities, unlike Google’s fiasco earlier this year. Unprompted, Gemini generated collections of grossly inaccurate historical “photos,” including ethnically diverse Nazis and American Founding Fathers. The backlash was so strong that Google didn’t reintroduce the generation of images of people until six months later.